
The blind spot on the clinical network

Healthcare has spent years hardening email, identity, and endpoints while an enormous attack surface sits at the bedside, in the lab, and in imaging suites. Connected medical devices now do more than deliver therapy or capture data; they also run mainstream operating systems, rely on remote servicing, exchange protected health information, and increasingly participate in workflows that cannot simply be paused when something looks suspicious. That reality makes medical-device risk different from ordinary IT risk. If a workstation fails, productivity drops. If a critical clinical device fails or is manipulated, care can be delayed, degraded, or made unsafe. Yet many organizations still assess device cyber risk with tools built primarily for general IT rather than for clinical environments.

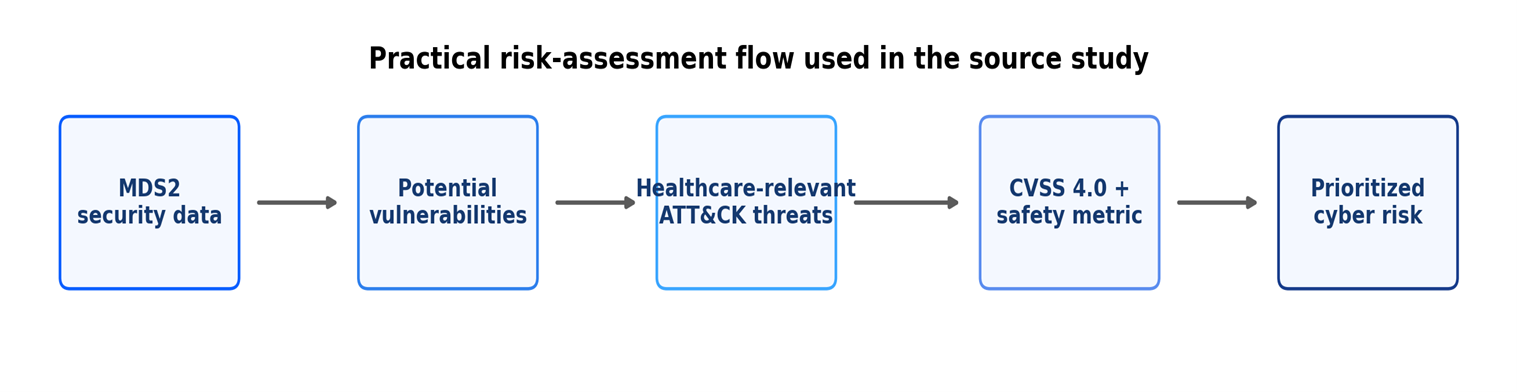

The idea behind this article points to a more practical path. Instead of starting only with abstract frameworks, it starts with something many hospitals already ask vendors to provide during procurement and onboarding: the Manufacturer Disclosure Statement for Medical Device Security, or MDS2. In plain language, MDS2 is a structured security disclosure form. It tells buyers whether a device supports features such as patching, logging, encryption, authentication, hardening, and remote access controls. On its own, that information is useful but incomplete. The approach examined in this article pairs it with MITRE ATT&CK threat intelligence and CVSS 4.0 scoring, including the safety metric. The result is a method designed not just to describe device posture, but to rank where action is most urgent for healthcare delivery organizations.

Figure 1.

Why do current approaches often miss the mark?

A method built for prioritization matters because the current state of device risk assessment is still messy. Some approaches rely heavily on published CVEs. Others lean on expert panels, custom rubrics, or long questionnaires. Still others focus more on the enterprise infrastructure around the device than on the device itself. Those methods can be helpful, but they are often slow, expensive, inconsistent, or difficult to keep current. They also tend to treat patient safety as something bolted on later. For industry professionals balancing procurement timelines, vulnerability backlogs, and capital replacement cycles, that is a poor fit. Leaders need a method that is understandable, repeatable, and dynamic enough to evolve with the threat landscape.

The operational challenge is easy to underestimate. Large health systems may manage thousands of connected devices across acute care, ambulatory care, labs, imaging, and home-monitoring environments. Many are long-lived assets. Some are lightly instrumented from a security perspective. Some are shared between departments that use different workflows and escalation paths. In that context, a risk method that depends on convening panels of experts for every asset, or on lengthy custom questionnaires, will struggle to scale. The industry does not just need better scoring. It needs a process that fits the pace and complexity of modern clinical operations.

The study evaluated the approach across six different medical devices from different manufacturers: a defibrillator/monitor/pacemaker, automated dispensing cabinet, vital signs monitor, electrocardiography system, hospital glucose meter, and mobile X-ray device. Together, they represented both Windows- and Linux-based systems and a range of clinical roles. The methodology translated MDS2 responses into potential vulnerabilities, mapped those vulnerabilities against healthcare-relevant ATT&CK techniques, compared the results against STRIDE, and then scored severity using CVSS 4.0. It also used CISA threat-frequency data to calculate technical risk scores. The aim was not to create a perfect universal score. It was to create a faster, healthcare-centered way to prioritize action.
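The flow described above (disclosure answers, then potential vulnerabilities, then threat mapping, then a frequency-weighted score) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's implementation: the capability names, the capability-to-technique mapping, the severity values, the frequency weights, and the `severity × frequency` formula are all hypothetical placeholders. Only the ATT&CK technique IDs and names are real.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical MDS2-style answers for one device: capability -> supported?
MDS2_ANSWERS: Dict[str, bool] = {
    "unique_user_authentication": False,
    "security_patching": True,
    "audit_logging": False,
    "restricted_remote_service": False,
}

# Illustrative mapping from a missing capability to an ATT&CK technique ID.
CAPABILITY_TO_TECHNIQUE: Dict[str, Optional[str]] = {
    "unique_user_authentication": "T1078",  # Valid Accounts
    "security_patching": "T1190",           # Exploit Public-Facing Application
    "audit_logging": None,                  # left unmapped in this toy example
    "restricted_remote_service": "T1133",   # External Remote Services
}

# Placeholder numbers (assumptions): CVSS-like severity on a 0-10 scale and
# a 0-1 weight standing in for observed threat frequency.
ASSUMED_SEVERITY: Dict[str, float] = {
    "unique_user_authentication": 8.0,
    "security_patching": 7.0,
    "audit_logging": 4.0,
    "restricted_remote_service": 6.0,
}
ASSUMED_FREQUENCY: Dict[str, float] = {"T1078": 0.5, "T1190": 0.25, "T1133": 0.25}


@dataclass
class Finding:
    capability: str
    technique: Optional[str]
    risk: float


def assess(answers: Dict[str, bool]) -> List[Finding]:
    """Turn unsupported capabilities into findings, highest risk first."""
    findings = []
    for capability, supported in answers.items():
        if supported:
            continue  # a supported capability yields no finding here
        technique = CAPABILITY_TO_TECHNIQUE.get(capability)
        # Unmapped findings score 0.0 in this sketch and would need manual review.
        frequency = ASSUMED_FREQUENCY.get(technique, 0.0) if technique else 0.0
        findings.append(
            Finding(capability, technique, ASSUMED_SEVERITY[capability] * frequency)
        )
    return sorted(findings, key=lambda f: f.risk, reverse=True)


findings = assess(MDS2_ANSWERS)
total_risk = sum(f.risk for f in findings)
for f in findings:
    print(f.capability, f.technique, f.risk)
print("cumulative:", total_risk)
```

The point of the sketch is the shape of the data, not the numbers: once disclosure answers are structured, the mapping and weighting tables can be refreshed independently as threat intelligence changes.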

The overlooked asset hiding in plain sight

One of the clearest findings is that MDS2 deserves far more attention than it typically gets. In many organizations, MDS2 is treated as a procurement form to collect, file, and forget. The study argues the opposite: it can serve as the backbone of a living risk-assessment process. That is significant because MDS2 is already familiar to manufacturers and increasingly expected by health systems. Major organizations such as the VA, Mayo Clinic, Johns Hopkins, and the University of California have all used MDS2 in device review or onboarding processes. In other words, the industry does not need brand-new data standards to get started. It can get more value from a standard it already recognizes.

That point has strategic importance for procurement leaders. The best time to discover that a device lacks strong authentication, patchability, logging, or remote-access constraints is before the purchase order is finalized—not after the equipment is deployed to a critical care unit. MDS2 gives provider organizations a way to ask more informed questions earlier in the lifecycle. Used well, it can improve contract language, segmentation decisions, exception handling, and refresh planning. Used poorly, it becomes another PDF no one opens again. The study’s real provocation is not that hospitals need more forms. It is that they need to operationalize the form they already have.

The research also found that MDS2-based analysis surfaced more issues than CVE lookups alone. Only two of the six devices had published CVEs during the study period, yet the MDS2-driven method identified additional potential vulnerabilities across the device set. That is an important operational point. CVEs are essential, but they are not a complete picture of exposure. A device can lack a public CVE and still present real security concerns because of weak authentication, limited logging, insufficient hardening, incomplete backup capability, or remote-service dependencies. For CISOs, HTM leaders, and security architects, that makes a persuasive case for using disclosure data and design characteristics, not just public vulnerability feeds, to guide prioritization.

Why ATT&CK adds needed realism

The ATT&CK comparison is equally telling. In aggregate, the ATT&CK-based method mapped 71% of identified device vulnerabilities to relevant threats, compared with 60% using STRIDE. That gap is not enormous, but it is meaningful. STRIDE remains useful as a classic threat-modeling lens, especially in design discussions, yet it is broad by nature. ATT&CK gave the study a more grounded view of how real adversaries target healthcare. Just as important, ATT&CK is updated regularly. That gives security programs a built-in way to refresh assessments as threat activity changes. For an industry facing persistent ransomware pressure, credential abuse, phishing campaigns, and supply-chain concerns, a living threat model is more valuable than a static one.
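A coverage figure of this kind is simply the fraction of identified vulnerabilities that a threat model can tie to at least one threat. The sketch below shows that calculation on arbitrary toy data; the vulnerability labels and the choice of which entries are mapped are assumptions made for illustration and do not reproduce the study's 71% and 60% results.

```python
from typing import Dict, Optional


def mapping_coverage(mapping: Dict[str, Optional[str]]) -> float:
    """Fraction of vulnerabilities mapped to at least one threat."""
    if not mapping:
        return 0.0
    mapped = sum(1 for threat in mapping.values() if threat is not None)
    return mapped / len(mapping)


# Toy data: four placeholder vulnerabilities; None means "no threat mapped".
attack_mapping = {"V1": "T1078", "V2": "T1133", "V3": "T1190", "V4": None}
stride_mapping = {
    "V1": "Spoofing",
    "V2": None,
    "V3": "Elevation of privilege",
    "V4": None,
}

attack_coverage = mapping_coverage(attack_mapping)
stride_coverage = mapping_coverage(stride_mapping)
print(f"ATT&CK: {attack_coverage:.0%}, STRIDE: {stride_coverage:.0%}")
```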

That difference also matters for credibility with executive leadership. A risk argument built on abstract categories often sounds theoretical. A risk argument tied to techniques used by healthcare-targeting adversaries sounds operational. Boards, audit committees, and executive teams increasingly want to know not only whether a control gap exists, but whether it aligns with realistic attack behavior. ATT&CK helps answer that question. It narrows the conversation from “what could happen in theory” to “what attackers actually do.” That shift makes prioritization easier to defend when budgets and staffing are constrained.

The study’s risk-score findings reinforce that point. Among the six devices reviewed, the defibrillator/monitor/pacemaker produced the lowest cumulative technical risk score at 4.78, while the hospital glucose meter produced the highest at 9.48. The most important driver across devices was not exotic zero-day behavior. It was initial access, especially valid accounts. That should sound familiar to every healthcare defender. Credential abuse remains one of the most reliable ways into a network and, by extension, into device ecosystems that depend on identity, remote access, and default or shared account practices. For industry readers, the implication is practical: device cybersecurity is not only a biomedical engineering issue. It is also an identity, access, and operational-discipline issue.

Figure 2.

The patient-safety multiplier

Perhaps the most important business insight, however, is what happened when patient safety was treated as part of the scoring logic rather than as a separate conversation. Using CVSS 4.0’s optional safety metric, the study showed that several vulnerabilities became more urgent when potential patient impact was considered. Across the six devices, 29 of 95 total vulnerabilities were flagged as having patient-safety implications. The hospital glucose meter had the highest share, with 9 of 17 vulnerabilities carrying a safety flag. The defibrillator/monitor/pacemaker followed with 5 of 12. That kind of visibility changes the discussion in the boardroom and the procurement committee. A security issue is no longer just a control gap; it can also be a care-delivery risk.
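The counts above are easier to compare as shares. The short calculation below uses only the figures quoted in this article; the "remaining four devices" row is derived by subtraction and is not a number the article reports directly.

```python
# Counts quoted in the article: (safety-flagged, total vulnerabilities).
reported = {
    "all six devices": (29, 95),
    "hospital glucose meter": (9, 17),
    "defibrillator/monitor/pacemaker": (5, 12),
}


def share_percent(flagged: int, total: int) -> float:
    """Safety-flagged share as a percentage, rounded to one decimal."""
    return round(100 * flagged / total, 1)


# Derive the combined figures for the four devices not broken out above.
all_flagged, all_total = reported["all six devices"]
named = ("hospital glucose meter", "defibrillator/monitor/pacemaker")
rest_flagged = all_flagged - sum(reported[name][0] for name in named)
rest_total = all_total - sum(reported[name][1] for name in named)

shares = {name: share_percent(*counts) for name, counts in reported.items()}
shares["remaining four devices (derived)"] = share_percent(rest_flagged, rest_total)

for name, pct in shares.items():
    print(f"{name}: {pct}%")
```

Roughly half of the glucose meter's vulnerabilities and about two in five of the defibrillator/monitor/pacemaker's carry a safety flag, against about three in ten across the whole device set.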

This reframing matters because healthcare organizations often struggle to align cybersecurity and clinical engineering priorities. Traditional IT scoring can miss what clinicians intuitively understand: not every asset failure is equal. A compromise affecting a low-acuity administrative system is serious, but a compromise affecting a device involved in medication management, monitoring, or emergency response carries a different operational and ethical weight. By incorporating safety into severity scoring, the study gives leaders a more defensible way to allocate scarce resources. It helps answer a question that comes up in every mature program: what should we fix first when everything feels urgent?

It also changes the language of investment. When security teams talk only about malware, patching, and exploitability, they may struggle to compete with other operational priorities. When they can tie a vulnerability to therapy disruption, unsafe monitoring, or downstream patient-harm scenarios, the conversation becomes easier for nontechnical leaders to understand. That does not mean every vulnerability becomes a crisis. It means the organization gains a clearer way to distinguish routine cyber hygiene from clinically consequential risk. In a sector where margins are tight and downtime is costly, that kind of clarity has significant business value.

Figure 3. Source-derived device summary.

What industry professionals should do next

The findings translate into a practical near-term playbook.

- First, treat MDS2 as operational data, not paperwork. Build it into procurement, onboarding, segmentation, vulnerability management, and periodic review.

- Second, refresh device risk models with current ATT&CK intelligence rather than relying only on static threat lists.

- Third, make CVSS 4.0 with the safety metric part of the discussion for relevant devices, especially those used in high-acuity settings or tied directly to therapy and monitoring.

- Fourth, scrutinize authentication, account management, remote servicing, and patch pathways. The study repeatedly points back to these basics because attackers do too.

- Finally, make HTM, security, infrastructure, and clinical stakeholders co-owners of the process. Medical-device cyber risk lives at the seams between teams.

For many organizations, the right first move is not a moonshot program. It is a disciplined 12-month operating plan. Start by standardizing how MDS2 documents are collected, reviewed, and stored. Establish a minimum set of decision points for every new device: identity model, logging capability, patch route, remote-access controls, segmentation requirements, and documented exceptions. Then identify a limited set of device classes where safety-aware scoring will be used consistently, such as medication, monitoring, and life-support adjacent systems. Minor changes in process can produce outsized gains if they improve consistency and reduce ad hoc decision-making.
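One way to make the minimum decision points enforceable is to record them as structured fields rather than free text, so that any device with an unanswered item is easy to find. The sketch below is a hypothetical record layout, not a standard schema: the class name, field names, and example values are assumptions chosen to mirror the six decision points listed above.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class DeviceOnboardingRecord:
    """Hypothetical onboarding record; None means 'not yet decided'."""
    device_name: str
    identity_model: Optional[str] = None
    logging_capability: Optional[str] = None
    patch_route: Optional[str] = None
    remote_access_controls: Optional[str] = None
    segmentation_requirements: Optional[str] = None
    # An empty list means "reviewed, no exceptions needed".
    documented_exceptions: Optional[List[str]] = None


def open_decision_points(record: DeviceOnboardingRecord) -> List[str]:
    """Names of decision points that are still unanswered, in field order."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]


record = DeviceOnboardingRecord(
    device_name="vital signs monitor (example)",
    identity_model="per-user accounts",
    patch_route="vendor-supplied updates",
    documented_exceptions=[],
)
print(open_decision_points(record))
```

A review process could then refuse to close an onboarding ticket while `open_decision_points` returns anything, which is one simple way to reduce ad hoc decisions.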

Manufacturers also have a role to play. The study notes that MDS2 remains voluntary, which is a limitation. Still, market pressure is moving the field. Vendors that provide current, complete disclosures make life easier for provider organizations and strengthen their competitive position. In practice, that means timely updates after substantial device changes, clearer documentation of hardening and third-party components, and transparent answers about patching, remote access, and authentication. As more health systems formalize device-security review, better disclosures will increasingly become part of doing business rather than a nice-to-have.

None of this means the methodology is perfect, but industry professionals do not need perfection to benefit from the lesson. They need a process that is better than today’s patchwork of forms, spreadsheets, and one-off judgments. On that measure, this work is compelling.

A better operating model for connected care

The broader message is that healthcare can no longer afford to separate cyber resilience from patient safety, or device management from enterprise security. Connected devices sit inside the clinical mission. That means risk assessment has to be fast enough for operations, specific enough for procurement, and credible enough for leadership. By connecting vendor disclosures, real-world adversary behavior, and safety-aware scoring, this research moves the conversation in that direction. For hospitals and manufacturers alike, the next phase of medical-device cybersecurity will not be won by more paperwork. It will be won by turning the right data into action.

Selected references

- Manufacturer Disclosure Statement for Medical Device Security. (2019). National Electrical Manufacturers Association (NEMA).

- MITRE ATT&CK. MITRE Corporation. https://attack.mitre.org/

- Common Vulnerability Scoring System Version 4.0: Specification Document Version 1.1. FIRST. https://www.first.org/cvss/v4.0/specification-document

- CISA Analysis: Fiscal Year 2023 Risk and Vulnerability Assessments. Cybersecurity and Infrastructure Security Agency.

- (2023). Multi-Source Analysis of Top MITRE ATT&CK Techniques.

Disclaimer: The views expressed in the article are those of the authors and not of the organizations they represent.

The post The Medical Device Cybersecurity Gap Hiding in Plain Sight appeared first on MedTech Intelligence.