HIT Consultant

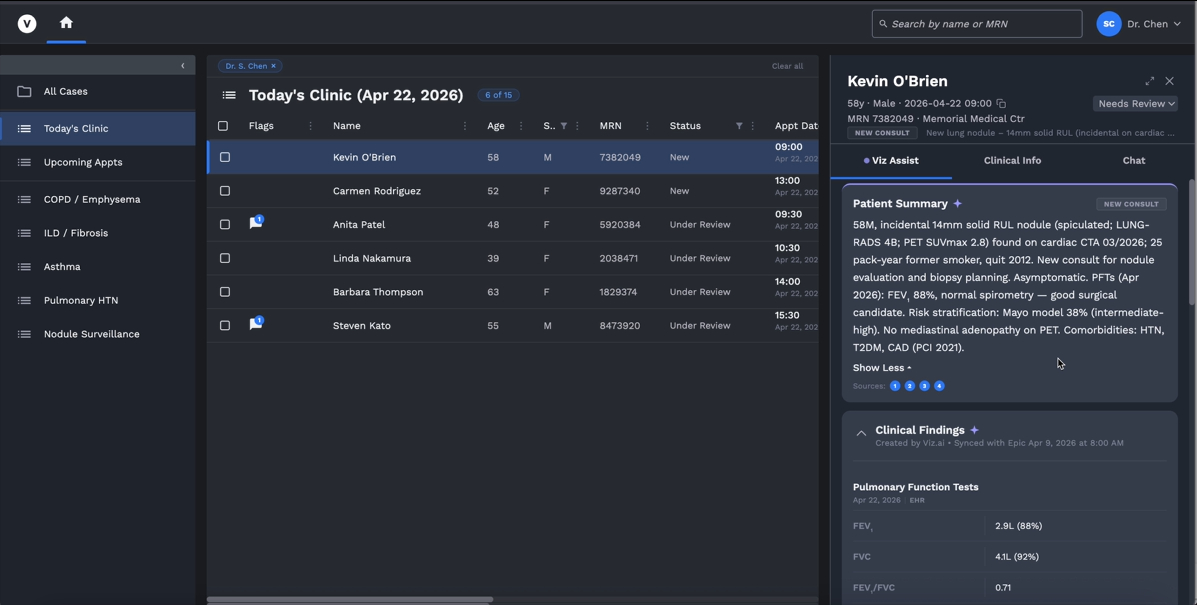

AI adoption is growing rapidly in healthcare, in areas ranging from clinical documentation to diagnostic imaging, revenue cycle management, and patient engagement. According to the 2023-24 American Hospital Association Information Technology Supplement, predictive AI integrated with EHR systems was already used in 71% of hospitals, and adoption has increased rapidly since the advent of generative AI.

However, many AI deployments fail in the real world and do not deliver the expected improvements in clinical value and operational efficiency. This is due to a growing disconnect between how these AI systems are evaluated and how they actually perform in practice. Most evaluations rely on standard machine learning metrics (AUROC, F1 score, AUPRC) that measure discrimination (how well a model separates cases from non-cases), precision, and recall. Retrospective accuracy is necessary but not sufficient for real-world deployment: evaluations should also ensure that AI models are safe, fair, properly calibrated, workflow-compatible, and operationally reliable when humans interact with them.

Various studies have highlighted this gap. Bedi, Liu, Orr-Ewing et al. found that most evaluation studies (95.4%) focused primarily on accuracy, while fairness, bias, and toxicity (15.8%), deployment considerations (4.6%), and calibration and uncertainty (1.2%) were rarely measured. Further, only 5% of the studies reviewed used real patient care data for evaluation. A recent study by researchers at the University of Minnesota also found that fewer than half of US hospitals using AI-assisted predictive tools evaluated them for bias. The risks of such gaps are substantial: Jabbour, Fouhey, Shepard et al. found that clinicians' diagnostic accuracy fell by 11.3 percentage points when they were shown systematically biased AI model predictions.

Why accuracy is not enough

There are multiple reasons accuracy-focused evaluation fails in the real world.

First, accuracy can hide poor calibration and uncertainty. Many commonly reported accuracy measures, such as AUROC, test relative ranking: for example, which of a pair of patients is at higher risk, or which of two claims is more likely to be denied. However, most healthcare decisions depend on thresholds and absolute values, such as whether a patient’s risk is high enough to trigger an intervention. Calibration and uncertainty are therefore crucial additional measures of whether a model’s predictions are usable for clinical or operational decisions.
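To make the ranking-versus-calibration distinction concrete, here is a minimal sketch on made-up toy data (not from any study). Model B is Model A with every risk divided by 3: the ranking of patients is unchanged, so AUROC is identical, but B systematically under-predicts risk, and at an assumed intervention threshold of 0.25 it flags far fewer patients. "Calibration-in-the-large" (mean predicted risk minus observed event rate) is used here as one simple calibration check among several.

```python
from itertools import product


def auroc(y_true, scores):
    """Share of (positive, negative) pairs ranked correctly; ties count as half."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))


def calibration_in_the_large(y_true, probs):
    """Mean predicted risk minus observed event rate; 0 means calibrated on average."""
    return sum(probs) / len(probs) - sum(y_true) / len(y_true)


def flagged(probs, threshold):
    """Number of patients whose predicted risk meets the intervention threshold."""
    return sum(1 for p in probs if p >= threshold)


# Toy data (illustrative only): 1 = the adverse event occurred.
y = [0, 0, 0, 0, 1, 0, 1, 1, 0, 1]
model_a = [0.1, 0.1, 0.2, 0.2, 0.3, 0.4, 0.6, 0.7, 0.5, 0.9]
model_b = [p / 3 for p in model_a]  # same ranking, systematically under-predicts risk

print(auroc(y, model_a), auroc(y, model_b))  # identical discrimination
print(calibration_in_the_large(y, model_a), calibration_in_the_large(y, model_b))
print(flagged(model_a, 0.25), flagged(model_b, 0.25))  # 6 vs 1 patients flagged
```

A ranking metric alone would rate these two models as equivalent, even though only one of them supports a fixed-threshold decision rule.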

Second, healthcare environments differ in case mix, EHR configuration, workflows, patient demographics, and many other characteristics. A one-time external validation is therefore insufficient, because it captures performance only at a single snapshot in time; evaluation needs to continue across the AI lifecycle.

Third, average performance can mask poor performance in specific subgroups. A model can perform well overall but still fail for rare diseases or unusual presentations, minority subgroups, or any category that is under-represented in its training data. Evaluations should report both overall and subgroup-specific performance so that unfairness, bias, or toxicity can be detected, and the list of subgroups analyzed should be as comprehensive as possible.
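The sketch below shows one way to report overall and per-subgroup sensitivity and specificity side by side. The record layout (`group`, `y`, `pred` keys), the group labels, and the toy counts are all hypothetical; the data is constructed so that overall sensitivity looks acceptable while the model misses every positive case in the small subgroup "B".

```python
from collections import defaultdict


def sens_spec(rows):
    """Sensitivity and specificity for binary predictions; NaN when undefined."""
    tp = sum(1 for r in rows if r["y"] == 1 and r["pred"] == 1)
    fn = sum(1 for r in rows if r["y"] == 1 and r["pred"] == 0)
    tn = sum(1 for r in rows if r["y"] == 0 and r["pred"] == 0)
    fp = sum(1 for r in rows if r["y"] == 0 and r["pred"] == 1)
    sens = tp / (tp + fn) if tp + fn else float("nan")
    spec = tn / (tn + fp) if tn + fp else float("nan")
    return sens, spec


def subgroup_report(rows, key="group"):
    """Overall metrics plus the same metrics for every value of `key`."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[key]].append(r)
    report = {"overall": sens_spec(rows)}
    for name, members in sorted(groups.items()):
        report[name] = sens_spec(members)
    return report


def make_rows(group, y, pred, n):
    """Hypothetical helper: n identical toy records."""
    return [{"group": group, "y": y, "pred": pred} for _ in range(n)]


# Toy data: group "B" is small, and the model misses all of its positive cases.
rows = (make_rows("A", 1, 1, 7) + make_rows("A", 1, 0, 1) + make_rows("A", 0, 0, 10)
        + make_rows("B", 1, 0, 2) + make_rows("B", 0, 0, 5))

for name, (sens, spec) in subgroup_report(rows).items():
    print(f"{name}: sensitivity={sens:.2f} specificity={spec:.2f}")
```

In practice the same report would be repeated for every subgroup dimension of interest (for example age band, sex, language, or payer), with attention to how few cases some subgroups contain.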

Fourth, many failures are operational: the implementation layer breaks even when the model itself is statistically accurate. Causes include stale or incorrect data, wrong context mapping, lagging data feeds, incorrect routing, and downtime; all of these reduce reliability and have clinical and operational consequences.
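Some of these operational failures can be caught before a prediction is ever produced. The following is a minimal sketch of a pre-scoring input check, assuming hypothetical feature names (`age`, `heart_rate`, `creatinine`), a hypothetical `last_updated` timestamp on each record, and an assumed six-hour staleness tolerance; real checks and tolerances would be specific to each model and site.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical feature names and staleness tolerance; real values are model- and site-specific.
REQUIRED_FIELDS = ("age", "heart_rate", "creatinine")
MAX_STALENESS = timedelta(hours=6)


def input_issues(record, now):
    """Return a list of data problems that should block or flag a prediction."""
    issues = []
    missing = [f for f in REQUIRED_FIELDS if record.get(f) is None]
    if missing:
        issues.append("missing fields: " + ", ".join(missing))
    updated = record.get("last_updated")
    if updated is None:
        issues.append("no last_updated timestamp")
    elif now - updated > MAX_STALENESS:
        issues.append(f"stale data: last updated {now - updated} ago")
    return issues


def score_or_flag(record, model, now):
    """Score only when inputs pass the checks; otherwise return the issues for a fallback path."""
    issues = input_issues(record, now)
    if issues:
        return None, issues
    return model(record), []


def toy_model(record):
    """Stand-in for a real model."""
    return 0.42


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = {"age": 70, "heart_rate": 88, "creatinine": 1.1, "last_updated": now - timedelta(hours=1)}
stale = dict(fresh, last_updated=now - timedelta(days=2))

print(score_or_flag(fresh, toy_model, now))  # (0.42, [])
print(score_or_flag(stale, toy_model, now))
```

Returning the issues instead of silently scoring makes it possible to route the case to a fallback and to count how often each failure type occurs.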

Finally, most measures evaluate only the AI, not the entire system, which includes the humans interacting with it. Users may over- or under-trust AI outputs, and behavioral changes creep in once AI solutions are deployed. To truly measure efficacy, safety, and reliability, the human-plus-AI team should be evaluated rather than the model alone. Morey, Rayo, and Woods demonstrated that measuring AI capabilities alone does not guarantee the safety and effectiveness of joint human-AI deployments.
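One simple way to look at the human-plus-AI team rather than the model alone is to compare the AI's suggestion with the clinician's final decision on each case. The sketch below is illustrative, assuming a hypothetical record per case with `ai`, `final`, and `truth` labels and made-up toy data; it reports AI-alone accuracy, team accuracy, and counts of over-reliance (a wrong suggestion followed) and under-reliance (a correct suggestion overridden).

```python
def team_vs_model(cases):
    """Compare AI-alone accuracy with the accuracy of the clinician's final decision."""
    n = len(cases)
    ai_correct = [c["ai"] == c["truth"] for c in cases]
    return {
        "ai_accuracy": sum(ai_correct) / n,
        "team_accuracy": sum(c["final"] == c["truth"] for c in cases) / n,
        # Over-reliance: a wrong AI suggestion was followed.
        "followed_wrong_ai": sum(not ok and c["final"] == c["ai"] for c, ok in zip(cases, ai_correct)),
        # Under-reliance: a correct AI suggestion was overridden.
        "overrode_correct_ai": sum(ok and c["final"] != c["ai"] for c, ok in zip(cases, ai_correct)),
    }


def case(ai, final, truth):
    """Hypothetical record: AI suggestion, clinician's final decision, ground truth."""
    return {"ai": ai, "final": final, "truth": truth}


# Toy data: the AI is right 8 times out of 10, but the team ends up right only 6 times.
cases = [case(1, 1, 1)] * 6 + [case(1, 0, 1)] * 2 + [case(1, 1, 0)] * 2
print(team_vs_model(cases))
```

In this toy example the model alone looks better than the team, which is exactly the kind of gap a model-only evaluation would miss.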

A playbook for true evaluation

The spine of a comprehensive healthcare AI evaluation framework remains technical and statistical validity. These measures should themselves be broad, covering ranking or discrimination (such as AUROC), threshold-based performance (such as F1, sensitivity, and specificity), calibration, and uncertainty.
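One common way to report uncertainty around a performance metric is a bootstrap confidence interval. The sketch below computes a percentile bootstrap interval for sensitivity on made-up toy labels; the resample count, the 95% level, and the fixed random seed are assumptions chosen for illustration, and the same `bootstrap_ci` helper could wrap any metric with the same signature.

```python
import random


def sensitivity(y_true, y_pred):
    """True positives divided by all actual positives; NaN if there are none."""
    positives = sum(1 for y in y_true if y == 1)
    tp = sum(1 for y, p in zip(y_true, y_pred) if y == 1 and p == 1)
    return tp / positives if positives else float("nan")


def bootstrap_ci(metric, y_true, y_pred, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate plus a percentile bootstrap (1 - alpha) confidence interval."""
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        value = metric([y_true[i] for i in idx], [y_pred[i] for i in idx])
        if value == value:  # drop NaN resamples (e.g., no positives drawn)
            stats.append(value)
    stats.sort()
    lower = stats[int(alpha / 2 * len(stats))]
    upper = stats[int((1 - alpha / 2) * len(stats)) - 1]
    return metric(y_true, y_pred), lower, upper


# Toy data: 20 positives (16 detected) and 30 negatives (3 false alarms).
y_true = [1] * 20 + [0] * 30
y_pred = [1] * 16 + [0] * 4 + [0] * 27 + [1] * 3
point, lower, upper = bootstrap_ci(sensitivity, y_true, y_pred)
print(f"sensitivity {point:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

With only 20 positive cases the interval is wide, which a single point estimate would hide.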

The framework should also measure subgroup-level performance. To test real-world performance, it should include temporal validation, in which the model is tested on data from a later period than, and distinct from, the data it was trained on. Similarly, the model should be tested on local datasets specific to the institution and use case for which it is being deployed.
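A temporal split with a local test set can be expressed in a few lines. This sketch assumes hypothetical records with `site` and `encounter_date` fields and made-up site names: everything before the cutoff date is available for training, and only the deploying institution's encounters on or after the cutoff are used for testing.

```python
from datetime import date


def temporal_local_split(records, cutoff, local_site=None):
    """Train on encounters before `cutoff`; test on later encounters, optionally
    restricted to the deploying site (local validation)."""
    train = [r for r in records if r["encounter_date"] < cutoff]
    test = [
        r for r in records
        if r["encounter_date"] >= cutoff and (local_site is None or r["site"] == local_site)
    ]
    return train, test


# Hypothetical records from two sites.
records = [
    {"id": 1, "site": "hospital_a", "encounter_date": date(2022, 3, 1)},
    {"id": 2, "site": "hospital_a", "encounter_date": date(2023, 6, 1)},
    {"id": 3, "site": "hospital_b", "encounter_date": date(2022, 9, 1)},
    {"id": 4, "site": "hospital_a", "encounter_date": date(2024, 2, 1)},
    {"id": 5, "site": "hospital_b", "encounter_date": date(2023, 8, 1)},
]
train, test = temporal_local_split(records, cutoff=date(2023, 1, 1), local_site="hospital_a")
print([r["id"] for r in train], [r["id"] for r in test])  # [1, 3] [2, 4]
```

Unlike a random split, this guarantees the test period never leaks into training, so it better approximates how the model will meet future patients at the deploying site.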

Another crucial step before full deployment is a silent trial, in which the model runs in a live or near-live environment without affecting care or operations. Its predictions are then compared against observed outcomes to measure reliability under real-world conditions. This helps identify statistical, operational, and behavioral risks and failure modes before the model is deployed. Recent research from Tikhomirov, Semmler, Prizant, et al. highlighted the importance of silent trials but also pointed out how rarely they are used in actual deployments.
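A silent trial can be sketched as "log, don't show, evaluate later". In this minimal illustration the `SilentTrialLog` class, the encounter IDs, the 0.5 alert threshold, and the outcome data are all hypothetical. Besides sensitivity and positive predictive value, it reports coverage (the share of eligible encounters that actually received a prediction), which surfaces operational gaps such as a lagging feed.

```python
from dataclasses import dataclass, field


@dataclass
class SilentTrialLog:
    """Stores predictions made in silent mode; nothing is shown to clinicians."""
    scores: dict = field(default_factory=dict)

    def record(self, encounter_id, score):
        self.scores[encounter_id] = score


def evaluate_silent_trial(log, outcomes, threshold):
    """Compare logged predictions with outcomes observed after the fact.

    `outcomes` maps every eligible encounter to its observed outcome (0 or 1).
    """
    scored = [e for e in outcomes if e in log.scores]
    flagged = [e for e in scored if log.scores[e] >= threshold]
    positives = [e for e in scored if outcomes[e] == 1]
    tp = sum(1 for e in flagged if outcomes[e] == 1)
    return {
        # Gaps in coverage point to operational failures such as lagging feeds or downtime.
        "coverage": len(scored) / len(outcomes),
        "sensitivity": tp / len(positives) if positives else float("nan"),
        "ppv": tp / len(flagged) if flagged else float("nan"),
    }


log = SilentTrialLog()
log.record("enc1", 0.8)
log.record("enc2", 0.6)
log.record("enc3", 0.2)  # "enc4" is never scored, e.g. because its data feed lagged
outcomes = {"enc1": 1, "enc2": 0, "enc3": 1, "enc4": 0}
print(evaluate_silent_trial(log, outcomes, threshold=0.5))
```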

Evaluations should also measure human factors: response latency, AI-suggestion acceptance and override rates, workload effects, and trust in the system. These measurements should capture the impact on outcomes, not just on the specific tasks being performed, which requires separating model efficacy from implementation efficacy. This is difficult, but it is necessary to ensure that AI drives improvements in standard of care, claims processing accuracy, and other key healthcare measures.
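Some of these human factors can be computed directly from interaction logs. The sketch below assumes a hypothetical event format in which each AI suggestion is accepted, overridden, or ignored, with a response latency in seconds; workload and trust usually need surveys or interviews and are not captured here.

```python
from statistics import median


def human_factors_summary(events):
    """Summarize how clinicians responded to AI suggestions.

    Each event: {"action": "accepted" | "overridden" | "ignored", "latency_s": seconds or None}.
    """
    n = len(events)
    latencies = [e["latency_s"] for e in events if e["latency_s"] is not None]
    return {
        "acceptance_rate": sum(e["action"] == "accepted" for e in events) / n,
        "override_rate": sum(e["action"] == "overridden" for e in events) / n,
        "ignored_rate": sum(e["action"] == "ignored" for e in events) / n,
        "median_latency_s": median(latencies) if latencies else None,
    }


# Toy interaction log.
events = [
    {"action": "accepted", "latency_s": 30},
    {"action": "accepted", "latency_s": 90},
    {"action": "overridden", "latency_s": 60},
    {"action": "ignored", "latency_s": None},
]
print(human_factors_summary(events))
```

Tracked over time and broken down by user role or unit, rising ignore rates or falling latencies can hint at alert fatigue or growing over-reliance, though interpreting them still requires linking back to outcomes.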

Finally, continuous post-deployment monitoring is crucial. Data shift in healthcare is constant: seasonal disease patterns, staff turnover, coding changes, and new devices, systems, or workflows all change the data a model sees. Continuous monitoring should test for feature, performance, and calibration drift, both for the entire population and for specific subgroups, and any variations should be carefully investigated.
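As one illustration of feature-drift monitoring, the sketch below computes the Population Stability Index (PSI), a widely used drift statistic, per subgroup. The bin edges, subgroup names, and values (standing in for a hypothetical lab value) are assumptions; PSI cutoffs such as 0.1 and 0.25 are common rules of thumb rather than clinical standards. Performance and calibration drift can be monitored in the same per-subgroup way by recomputing those metrics over rolling time windows.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges):
    """Share of values per bin; `edges` are interior cut points and the outer bins are open-ended."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # A small floor keeps the log finite when a bin is empty.
    return [max(c / len(values), 1e-6) for c in counts]


def psi(reference, current, edges):
    """Population Stability Index of `current` relative to `reference`."""
    ref, cur = bin_shares(reference, edges), bin_shares(current, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def psi_by_subgroup(reference, current, edges):
    """`reference` and `current` map subgroup name -> list of feature values."""
    return {g: psi(reference[g], current[g], edges) for g in reference if g in current}


# Toy data: the feature distribution shifts upward in one subgroup only.
edges = [25, 50, 75]
reference = {"age_65_plus": list(range(100)), "under_65": list(range(100))}
current = {"age_65_plus": [v + 40 for v in range(100)], "under_65": list(range(100))}
print(psi_by_subgroup(reference, current, edges))
```

Here the population-wide picture could look only mildly shifted, while the per-subgroup check isolates the subgroup whose inputs have actually changed.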

Conclusion

The healthcare industry currently talks about AI as if models fail mainly because they are inaccurate. In practice, many models are already reasonably accurate; real-world failures stem from poor calibration, inadequate localization, weak monitoring, faulty integrations, and similar factors. Until evaluation frameworks reflect the realities of the environments in which models are deployed (workflow complexity, human behavior, data instability, and system risks), healthcare AI deployments will lack the reliability needed to deliver consistent clinical value and outcomes.

About Vikram Venkat

Vikram Venkat is a Principal at Cota Capital, an early-stage venture capital firm where he invests across healthcare and AI. Vikram previously worked in healthcare and AI as a consultant at the Boston Consulting Group and at three other venture capital firms.